1/28/2024

010 Editor: change encoding

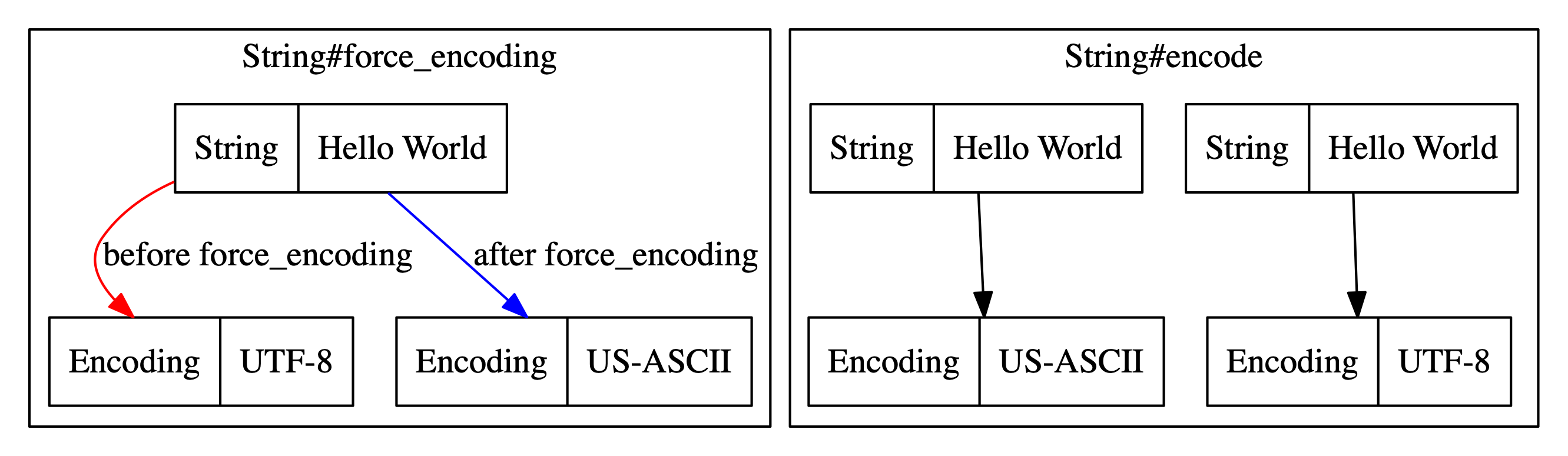

Like my XORSelection.1sc script, it encodes and decodes with the XOR operator. The encoding method is as follows: the values of bytes 1 and 2 are XORed and the result is stored as byte 2. This result (byte 2) is then XORed with the value of byte 3, and that result is stored as byte 3.

Compared with UTF-8, UTF-16 is better suited to BMP (Basic Multilingual Plane) characters, which it represents with 2 bytes each. This speeds up indexing and counting code points as long as the text contains no supplementary characters (characters outside the BMP). As for the BOM (Byte Order Mark), it is neither required nor recommended with UTF-8, because there it serves no purpose other than marking the start of a UTF-8 stream. Since UTF-8 encodes every code point as a sequence of single bytes (one byte minimum), endianness is not an issue in UTF-8. In UTF-16, by contrast, the BOM can help detect the character set, but its main job is to indicate the byte order in which the file should be read.

When we decode a byte sequence, there are cases in which it is not legal for the given Charset, or else it is not legal sixteen-bit Unicode. In other words, the given byte sequence has no mapping in the specified Charset. There are three predefined strategies (CodingErrorAction) for handling such malformed input: IGNORE ignores the malformed characters and resumes the coding operation, REPLACE replaces the malformed characters in the output buffer and resumes the coding operation, and REPORT throws a MalformedInputException. The default malformedInputAction of a CharsetDecoder is REPORT, whereas the default decoder used by InputStreamReader uses REPLACE.
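
The chained XOR described above can be sketched in Java as follows. This is not the 010 Editor script itself: it assumes the chain simply continues the same way to the last byte, and the names `XorChain`, `encode` and `decode` are hypothetical. Decoding walks backwards so that each byte is recovered from its predecessor while that predecessor is still in its encoded form.

```java
import java.util.Arrays;

// Hypothetical sketch of the chained XOR described above; it assumes the
// chain continues the same way to the end of the data.
final class XorChain {

    // Encode in place: each byte becomes (encoded previous byte) XOR (current byte).
    static void encode(byte[] data) {
        for (int i = 1; i < data.length; i++) {
            data[i] = (byte) (data[i - 1] ^ data[i]);
        }
    }

    // Decode in place, walking backwards so that data[i - 1] is still the
    // encoded value when it is used to recover data[i].
    static void decode(byte[] data) {
        for (int i = data.length - 1; i >= 1; i--) {
            data[i] = (byte) (data[i - 1] ^ data[i]);
        }
    }

    public static void main(String[] args) {
        byte[] data = {0x41, 0x42, 0x43, 0x44}; // "ABCD"
        byte[] original = data.clone();

        encode(data);
        System.out.println(Arrays.toString(data)); // [65, 3, 64, 4]

        decode(data);
        System.out.println(Arrays.equals(data, original)); // true
    }
}
```

Because XOR is its own inverse, the same operation serves for both directions; only the traversal order differs.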
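
The BOM and byte-count remarks can be checked with a small Java sketch that uses only the standard `StandardCharsets` constants (the `UtfBom` class and its `hex` helper are hypothetical names). In Java, the `UTF-16` charset encodes big-endian and writes a BOM (FE FF), while `UTF-16BE`, `UTF-16LE` and `UTF-8` write none.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper class comparing UTF-8 and UTF-16 output in Java.
final class UtfBom {

    // Format bytes as space-separated uppercase hex, e.g. "FE FF 00 54".
    static String hex(byte[] bytes) {
        StringBuilder sb = new StringBuilder();
        for (byte b : bytes) {
            if (sb.length() > 0) {
                sb.append(' ');
            }
            sb.append(String.format("%02X", b & 0xFF));
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        // "UTF-16" encodes big-endian and prepends a BOM; the other charsets add none.
        System.out.println(hex("T".getBytes(StandardCharsets.UTF_16)));   // FE FF 00 54
        System.out.println(hex("T".getBytes(StandardCharsets.UTF_16BE))); // 00 54
        System.out.println(hex("T".getBytes(StandardCharsets.UTF_16LE))); // 54 00
        System.out.println(hex("T".getBytes(StandardCharsets.UTF_8)));    // 54

        // Byte counts: ASCII text, a BMP character (U+20AC) and one outside the BMP (U+1F600).
        String[] samples = {"Hello", "\u20AC", "\uD83D\uDE00"};
        for (String s : samples) {
            System.out.println(s.getBytes(StandardCharsets.UTF_8).length + " bytes in UTF-8, "
                + s.getBytes(StandardCharsets.UTF_16BE).length + " bytes in UTF-16BE");
        }
    }
}
```

The counts come out as 5 vs 10 bytes for "Hello", 3 vs 2 for the euro sign (a BMP character) and 4 vs 4 for the emoji, which UTF-16 stores as a surrogate pair.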
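
The three CodingErrorAction strategies can be compared with a short Java sketch that decodes the bytes 54 FF 78 as UTF-8. The byte 0xFF never appears in well-formed UTF-8, so it counts as malformed input. The class and method names are hypothetical; `CharsetDecoder.onMalformedInput`, `CodingErrorAction` and `InputStreamReader` are standard `java.nio` / `java.io` APIs.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.Reader;
import java.nio.ByteBuffer;
import java.nio.charset.CharacterCodingException;
import java.nio.charset.CodingErrorAction;
import java.nio.charset.MalformedInputException;
import java.nio.charset.StandardCharsets;

// Hypothetical demo class: decode the same malformed UTF-8 input with each CodingErrorAction.
final class MalformedInputDemo {

    // A fresh decoder reports malformed input by default; override that per call.
    static String decode(byte[] bytes, CodingErrorAction action) throws CharacterCodingException {
        return StandardCharsets.UTF_8.newDecoder()
            .onMalformedInput(action)
            .decode(ByteBuffer.wrap(bytes))
            .toString();
    }

    // An InputStreamReader built from a Charset replaces malformed input instead of throwing.
    static String readWithInputStreamReader(byte[] bytes) throws IOException {
        StringBuilder sb = new StringBuilder();
        try (Reader reader = new InputStreamReader(new ByteArrayInputStream(bytes), StandardCharsets.UTF_8)) {
            int c;
            while ((c = reader.read()) != -1) {
                sb.append((char) c);
            }
        }
        return sb.toString();
    }

    public static void main(String[] args) throws IOException {
        byte[] input = {0x54, (byte) 0xFF, 0x78}; // 'T', an invalid UTF-8 byte, 'x'

        System.out.println(decode(input, CodingErrorAction.IGNORE)); // Tx
        System.out.println(decode(input, CodingErrorAction.REPLACE).equals("T\uFFFDx")); // true
        try {
            decode(input, CodingErrorAction.REPORT);
        } catch (MalformedInputException e) {
            System.out.println("REPORT threw: " + e.getMessage());
        }
        System.out.println(readWithInputStreamReader(input).equals("T\uFFFDx")); // true
    }
}
```

IGNORE drops the bad byte, REPLACE substitutes U+FFFD, and REPORT throws; the InputStreamReader result matches REPLACE, its default.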

One of the earliest encoding schemes, ASCII (American Standard Code for Information Interchange), uses a single-byte encoding scheme. Each character in ASCII is represented by a seven-bit binary number, which still leaves one bit free in every byte! ASCII's 128-character set covers the English alphabet in lower and upper case, digits, and some special and control characters.

Let's define a simple method in Java to display the binary representation of a string under a particular encoding scheme:

```java
// Requires java.nio.charset.Charset, java.util.stream.IntStream, java.util.stream.Collectors
String convertToBinary(String input, String encoding) {
    byte[] encoded_input = input.getBytes(Charset.forName(encoding));
    return IntStream.range(0, encoded_input.length)
        .map(i -> encoded_input[i] & 0xFF) // read each byte as unsigned (0-255)
        .mapToObj(e -> String.format("%8s", Integer.toBinaryString(e)).replace(' ', '0')) // pad to 8 bits
        .collect(Collectors.joining(" "));
}
```

Now, character 'T' has a code point of 84 in US-ASCII (ASCII is referred to as US-ASCII in Java), so convertToBinary("T", "US-ASCII") returns 01010100.

UTF-8 and UTF-16 are just two of the established standards for encoding. They differ only in the number of bytes they use to encode each character. Both are variable-width encodings and can use up to four bytes per character, but at minimum UTF-8 uses only one byte (8 bits) while UTF-16 uses 2 bytes (16 bits). This has a huge impact on the size of encoded files: using only ASCII characters, a file encoded in UTF-16 would be about twice as large as the same file encoded in UTF-8. Moreover, many common characters have different lengths in UTF-8, which makes indexing by code point and counting code points slow.

I have done all the steps from the provided thread, and I also tried to isolate one particular table by loading it separately into a new report, but it didn't work. The column is still Hash encoded even though the table has only 48,640 rows.

Just as a test, I changed the column data type from decimal to whole number, and after I refreshed the report, VertiPaq showed the column as Value encoded. Of course, this is not a solution because I'm losing values, but I wanted to double-check whether the issue is related to the number of values in the table or to the decimal data type. It looks like the decimal column type is the likely issue, and I don't know whether this is a bug in VertiPaq, since other decimal columns correctly have their encoding type set to Value.

I tried multiple scenarios and found one that seems to work if I have more than 448,000 rows in the table. The problem is related to the number of digits the decimal column has; in my case, the values imported via OData have 6 digits. It looks like if I combine Tabular Editor (where I set Encoding Hint to Value) with Power Query Editor (where I right-click the decimal column -> Transform -> Round -> Round and set Decimal Places to 1), VertiPaq uses Value as the encoding type. Unfortunately, this configuration does not work if you set Decimal Places to more than 1, even if the table has only 200,000 rows.
